Tag: Python

-

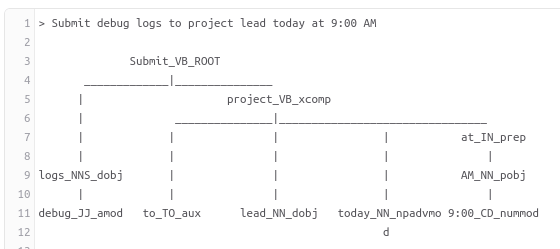

Dependency Parsing in NLP

Syntactic Parsing or Dependency Parsing is the task of recognizing a sentence and assigning a syntactic structure to it. The most widely used syntactic structure is the parse tree which can be generated using some parsing algorithms.

-

A Cognitive study of Lexicons in Natural Language Processing.

A word in any language is made of a root or stem word and an affix. These affixes are usually governed by some rules called orthographic rules. These orthographic rules define the spelling rules for a word composition in Morphological Parsing phase.

-

Setting up Natural Language Processing Environment with Python

In this blog post, I will be discussing all the tools of Natural Language Processing pertaining to Linux environment, although most of them would also apply to Windows and Mac. So, let’s get started with some prerequisites.We will use Python’s Pip package installer in order to install various python modules. $ sudo apt install python-pip…